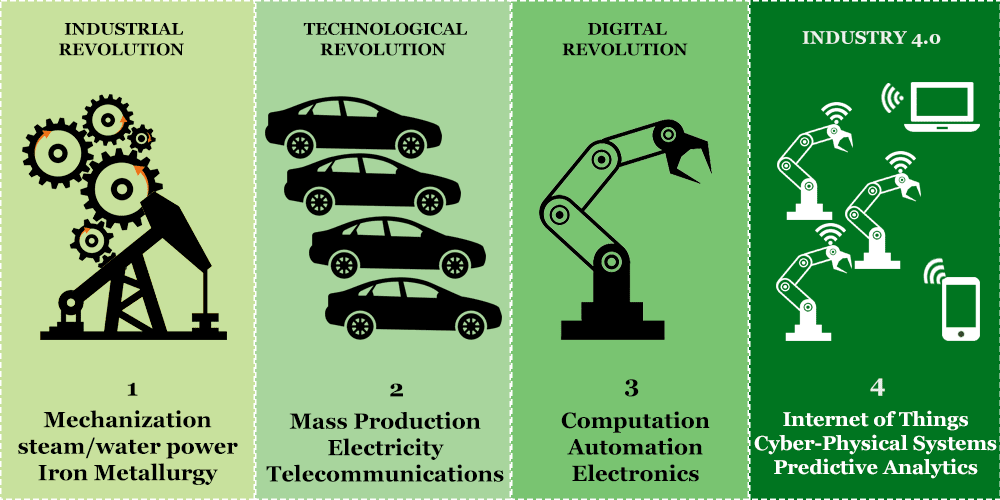

Industrial Manufacturing Generations

The Industrial Revolution

The Industrial Revolution started in the 1760s with the shift from simple hand-based manufacturing to water- and steam-powered machines and chemical processing. Factories as we know them today started to emerge at this time. This heralded one of the greatest changes in human history, with significant impacts on every aspect of life, and introduced sustained growth to a long-stagnant world economy.

Defining Innovations:

- Mechanization: The textile industry was the principal driving force behind many of the manufacturing methods we still employ today. Steam-powered machines spun cotton thread at superhuman speeds, and power looms increased worker efficiency dozens of times over.

- Power: Coal became the power source of the first industrial revolution. During the second half of the revolution (the early 1800s), the fuel efficiency of steam engines improved nearly 10-fold, and the invention of high-pressure steam engines with a high power-to-weight ratio opened the door to use in transportation.

- Metallurgy: Improvements in furnaces, fuel, and processes drastically lowered the cost and increased the production capacity and output of iron, which was the key building block for machines, tools, and infrastructure.

- Chemical: Essential chemicals needed in various stages of manufacturing had to be produced at larger scales to reduce costs. One example of innovation in this area: glass containers were very expensive at the time, which limited their size and made chemicals like sulfuric acid difficult to manufacture in large quantities. This was solved by lining containers with lead so that the acid could be handled in larger quantities.

The Technological Revolution

Following a several-year decline in game-changing inventions, the second industrial revolution began around 1860, when simultaneous improvements in steel and iron, power generation (steam, coal), and railroads enabled mass production and drove the widespread adoption of infrastructure such as telegraphs, railway networks, electricity, assembly lines, and supply lines (gas, water). The technological revolution ended in the early 20th century, around the start of World War I, by which time factories had begun utilizing electricity and production lines.

Defining Innovations:

- Power: Electric power generation, manipulation, and transmission technologies were developed for industrial manufacturing long before they reached consumers. Electric lighting significantly improved working conditions by eliminating the pollution and excess heat of gas-based lights and removing their costly fire hazard altogether. AC induction motors were invented in the late 1880s and put to use in factories 30 years before arriving in consumer households.

- Mass Production: Manufacturers continued to build on economies of scale, making the mass production of complex objects like cars and household appliances possible. By dividing labor, assembly lines massively improved efficiency and reduced mistakes.

- Metallurgy: Iron proved too weak for railroad use. Advances in metallurgy allowed companies to mass-produce steel at lower cost, driving subsequent deployments of railroads and infrastructure.

- Transportation: During this period the United States laid more than 85,000 miles of railroad (a record that holds to this day). This set the stage for a myriad of new applications and stabilized the supply of raw materials, which was key to sustaining the huge momentum of the time.

- Telecommunications: The expansion of telegraph networks and the installation of undersea cables between France and Britain enabled companies to conduct business transactions like never before.

The Digital Revolution

The Digital Revolution is marked by monumental changes set in motion by the move from mechanical and analog systems to digital electronics. It began in the late 1950s and is still under way today. Logic circuits were implemented in all types of industrial and consumer devices, and computers, cell phones, and the Internet all played key roles in driving technological innovation.

Defining Innovations:

- Computation: The invention of the transistor in 1947 paved the way for modern computers as we know them. Over the years computers made their way into homes, governments, and factories, and eventually began replacing analog record-keeping systems (pen and paper), to the extent that today 99% of information exists in digital form.

- Automation: The introduction of programmable digital logic circuits created the possibility for intelligent systems, able to automate tasks to new levels. Robotics, sensor technology and computers are increasingly able to perform tasks faster, more efficiently and better than humans could ever hope to.

- Communications: With the incredible amount of digital information accumulating, the ability to access, update, and query data over large distances became vital. Computer systems can now communicate at near light speed, handle logistics, and coordinate companies with hundreds of thousands of employees, assets, and devices. The Internet has seen such widespread adoption that many people are open to the idea of access to it as a human right.

Industry 4.0

A revolution in its infancy: major technological innovations in software, artificial intelligence, and analytics have come together to create complex self-sustaining systems that exist in both the cyber realm and the physical world. These are known as cyber-physical systems (CPS). They have the potential to change manufacturing as we know it, further eliminating human elements along the way.

Developing Innovations:

- Industrial Internet of Things: The main driving force behind Industry 4.0. The Internet of Things is spreading to all facets of society, and industrial processes and systems can make extensive use of these technologies, for example for asset tracking, machine health monitoring, on-demand production, inventory control, and much more.

- Cyber-Physical Systems: Intelligent self-sustaining machines made with actuators, sensors and complex software are poised to bring about a new level of automation. They will be able to predict wear & tear, simulate adjacent component degradation and even perform self-maintenance.

- Artificial Intelligence: Using advanced machine-learning algorithms together with large datasets, engineers can give machines the capacity to learn and streamline processes.

Modern Technologies

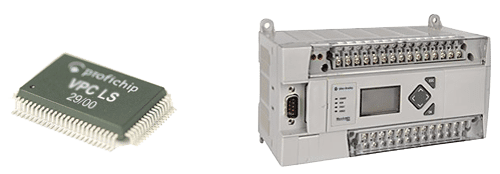

PLC

A programmable logic controller (PLC) is a type of microcontroller that uses Boolean logic to control machines. PLCs were, and in many cases still are, the building blocks of automated systems.

Modern PLCs have memory, a processor, and I/O; one can think of them as minicomputers embedded in a system to control its functions. They can carry out preprogrammed instructions such as arithmetic, counting, timing, and communication, and can trigger actuators and manage sensors in industrial machines. PLCs are not programmed in more complex general-purpose languages like C, C++, and C#; instead, simpler languages such as ladder diagram, structured text, instruction list, function block diagram, and sequential function chart are used.

PLCs vary widely in size, capability, and I/O. While chip-sized micro PLCs can control up to 32 I/O points, larger PLCs can control thousands of devices.
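To make the idea of preprogrammed Boolean logic and timing more concrete, here is a minimal sketch of a PLC-style scan loop, written in Python rather than a real PLC language. The names `MotorRung`, `OnDelayTimer`, and `run_scans` are hypothetical, and the timer counts scans instead of real time to keep the example deterministic.

```python
from dataclasses import dataclass


@dataclass
class MotorRung:
    """One ladder-style rung: a start/stop seal-in (latching) circuit."""
    running: bool = False  # output coil, remembered between scans

    def scan(self, start_pressed: bool, stop_pressed: bool) -> bool:
        # Ladder equivalent: (START or RUNNING) and not STOP -> RUNNING
        self.running = (start_pressed or self.running) and not stop_pressed
        return self.running


@dataclass
class OnDelayTimer:
    """On-delay timer that counts scans (an assumption; real PLCs use time)."""
    preset_scans: int
    elapsed: int = 0

    def scan(self, enable: bool) -> bool:
        # Accumulate while enabled, reset as soon as the input drops.
        self.elapsed = min(self.elapsed + 1, self.preset_scans) if enable else 0
        return self.elapsed >= self.preset_scans


def run_scans(inputs):
    """Run one scan per (start, stop) pair and return the output history."""
    motor = MotorRung()
    timer = OnDelayTimer(preset_scans=3)
    history = []
    for start, stop in inputs:
        # Each scan: read inputs, evaluate the logic, write outputs.
        motor_on = motor.scan(start, stop)
        at_speed = timer.scan(motor_on)
        history.append((motor_on, at_speed))
    return history
```

Pressing start for a single scan latches the motor on, the timer output turns true on the third consecutive scan with the motor running, and a stop input drops both outputs in the same scan.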

PAC

PLCs have been steadily evolving beyond their limited functions, and indeed many have blurred the line between logic controllers and personal computers. PAC, short for programmable automation controller, came about as a term to better define these more advanced logic controllers. PACs are not only capable of traditional programmable logic control but also include general-purpose processors, which allow the use of more powerful languages like C. Another trait that sets them apart from advanced PLCs is the use of modern Ethernet communications.

IPC

Industrial PCs (IPCs) were first used for industrial automation in the early 1980s. They did not gain much favor then because both the hardware (not rugged, no wide temperature range) and the software (buggy operating systems that interfered with stability) were inadequate. Nowadays, with real-time kernels and ruggedized purpose-built hardware, they are starting to replace PLCs and PACs in many applications due to their flexibility, power, and cost.

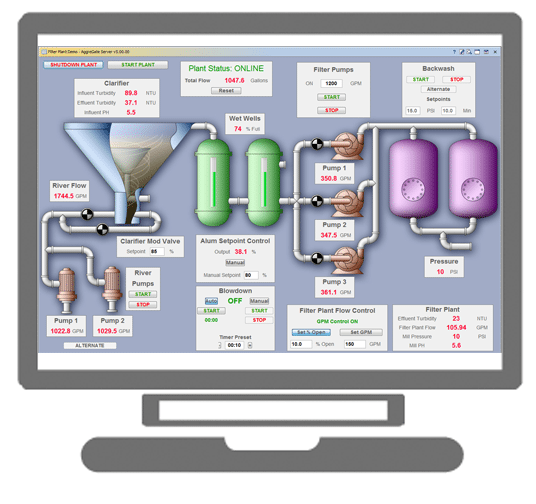

HMI

A human-machine interface is a device used to represent machine information in a readable manner to human operators. This allows them to easily visualize control processes and interact with the machine. For industrial purposes, functional color schemes are adopted and usability is valued over form/looks.

SCADA

Supervisory control and data acquisition (SCADA) systems centralize data, supervision, and control so that complex systems can be easily monitored and controlled from endpoints (PC workstation, smartphone). Data from DCSs, PLCs, and PACs drives the visual representations. SCADA is used to supervise, control, and monitor industrial networks and to verify system integrity and security.
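The centralizing role described above can be sketched as a loop that polls every controller and checks the collected values against limits. This is only an illustration: the reader callables stand in for real protocol drivers, and the tag names, limits, and the functions `poll` and `check_alarms` are all hypothetical.

```python
from typing import Callable, Dict, List, Tuple

# A "tag" is a named value read from a controller. Each reader is a stand-in
# for a real protocol driver, which is out of scope for this sketch.
TagReader = Callable[[], float]
Limits = Dict[str, Tuple[float, float]]


def poll(readers: Dict[str, TagReader]) -> Dict[str, float]:
    """Collect the current value of every tag into one central snapshot."""
    return {tag: read() for tag, read in readers.items()}


def check_alarms(snapshot: Dict[str, float], limits: Limits) -> List[str]:
    """Return the sorted names of tags outside their (low, high) limits."""
    alarms = []
    for tag, value in snapshot.items():
        # Tags without configured limits never raise an alarm.
        low, high = limits.get(tag, (float("-inf"), float("inf")))
        if not low <= value <= high:
            alarms.append(tag)
    return sorted(alarms)


# Hypothetical example data: two controllers, one tag each.
readers = {
    "plc1.tank_level": lambda: 82.0,
    "plc2.pump_temp": lambda: 95.5,
}
limits = {
    "plc1.tank_level": (10.0, 90.0),
    "plc2.pump_temp": (0.0, 80.0),
}
snapshot = poll(readers)
alarms = check_alarms(snapshot, limits)
```

With these made-up values only `plc2.pump_temp` lands in `alarms`, since 95.5 is above its upper limit of 80.0. A real system would feed the same snapshot to the operator displays.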

DCS

Distributed control systems (DCS) use a segmented architecture, dividing the controllers into more manageable sections. This is a cost-effective solution for large systems with thousands of I/O points; while very large PLCs can also work, the lengthy cabling and other factors make a DCS approach more viable.
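As a rough illustration of that segmentation, the sketch below groups I/O points by plant area and splits each area across controllers of limited capacity. The function `segment_io`, the area names, and the controller naming scheme are assumptions made for the example, not part of any DCS product.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def segment_io(points: Iterable[Tuple[str, str]],
               max_io_per_controller: int) -> Dict[str, List[str]]:
    """Group (area, tag) I/O points by area, then split each area into
    controller-sized sections so no controller exceeds its capacity."""
    by_area = defaultdict(list)
    for area, tag in points:
        by_area[area].append(tag)

    controllers = {}
    for area, tags in sorted(by_area.items()):
        for i in range(0, len(tags), max_io_per_controller):
            # Hypothetical naming scheme: <area>-ctrl<N>
            name = f"{area}-ctrl{i // max_io_per_controller + 1}"
            controllers[name] = tags[i:i + max_io_per_controller]
    return controllers


# Made-up example: three boiler points and one mixer point, two I/O per controller.
layout = segment_io(
    [("boiler", "t1"), ("boiler", "t2"), ("boiler", "t3"), ("mixer", "m1")],
    max_io_per_controller=2,
)
```

Here the boiler area needs two controllers and the mixer one, so each controller sits close to the few devices it serves instead of every cable running back to a single large unit.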

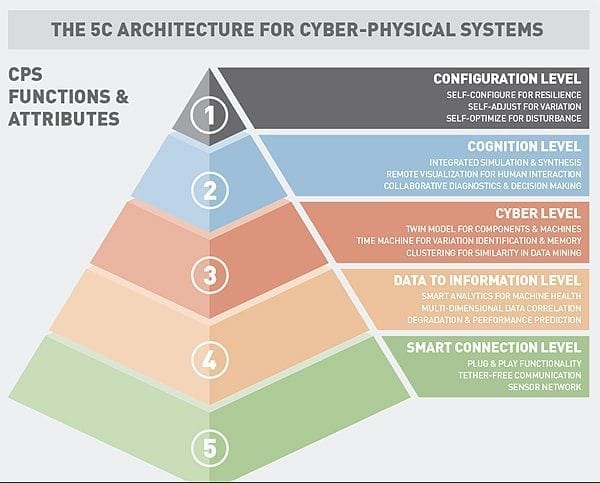

CPS

By implementing sensors, actuators, and complex virtualization software, machines are slowly blurring the line between the physical and virtual realms. Cyber-physical systems (CPS) take this even further and are a major innovation driving Industry 4.0. These systems draw on techniques from virtualization, predictive analytics, and big data to perform accurate self-simulations.
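One very simple form of predictive analytics is to fit a straight line through past wear measurements and extrapolate to a wear limit. The sketch below assumes wear grows roughly linearly with operating hours, which real components often do not; `fit_line`, `hours_until_limit`, and the sample numbers are hypothetical.

```python
from typing import Optional, Sequence, Tuple


def fit_line(xs: Sequence[float], ys: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def hours_until_limit(hours: Sequence[float], wear: Sequence[float],
                      limit: float) -> Optional[float]:
    """Estimate operating hours left before wear reaches the limit.

    Returns None when the fitted trend is flat or decreasing, because a
    linear extrapolation says nothing useful in that case.
    """
    slope, intercept = fit_line(hours, wear)
    if slope <= 0:
        return None
    hours_at_limit = (limit - intercept) / slope
    # Clamp at zero if the limit has already been passed.
    return max(0.0, hours_at_limit - hours[-1])


# Made-up readings: wear in millimetres sampled every 100 operating hours.
remaining = hours_until_limit([0, 100, 200, 300], [0.0, 0.1, 0.2, 0.3], limit=0.5)
```

For these made-up readings the wear rate is 0.001 mm per hour, so `remaining` comes out at approximately 200 hours. A real cyber-physical system would replace the straight line with a model of the specific component.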

IIoT

By implementing internet-enabled technology on everything from machines to the products they create, automated systems can track, manage, and orchestrate manufacturing much more efficiently. IoT technology can be placed on manufactured assets, on other assets like vehicles and machines, and even on personnel to control access. IoT-enabled sensor networks can be used in smart factories to gather readings on humidity, temperature, vibration, and much more.
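A sensor network like this typically produces a stream of small messages that have to be grouped before they are useful. The following sketch assumes each message is a JSON object with `device`, `metric`, and `value` fields; that message shape, the device names, and the function `summarize` are illustrative assumptions rather than any standard.

```python
import json
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, Tuple


def summarize(messages: Iterable[str]) -> Dict[Tuple[str, str], float]:
    """Average every metric per device from JSON-encoded sensor messages."""
    grouped = defaultdict(list)
    for raw in messages:
        msg = json.loads(raw)
        # Key by (device, metric) so readings from different sensors never mix.
        grouped[(msg["device"], msg["metric"])].append(msg["value"])
    return {key: mean(values) for key, values in grouped.items()}


# Made-up messages from one hypothetical machine, "press-1".
messages = [
    '{"device": "press-1", "metric": "temperature", "value": 70.0}',
    '{"device": "press-1", "metric": "temperature", "value": 74.0}',
    '{"device": "press-1", "metric": "humidity", "value": 40.0}',
]
summary = summarize(messages)
```

With the three sample messages, `summary` holds an average temperature of 72.0 and a humidity of 40.0 for `press-1`. The same grouping step works for vibration or any other metric the sensors report.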