At Embedded World 2021, the conference's first all-digital edition, Embedded Computing Design chose Lanner’s NGC-ready Edge AI Platform as one of its “Best in Show” winners in the flourishing AI/ML category. Lanner’s LEC-2290E, an NGC-ready edge solution, was designed to meet a growing and unavoidable challenge. Faster, smarter AI applications are running up against the physical limits of the dominant cloud and corporate network infrastructure, yet they still have to fit existing industry standards and IT workflows.

Even marginal improvements that help scale, manage, and accelerate AI deployments are highly valuable for both emerging and future solutions. The global race toward AI and machine learning is on the verge of becoming a runaway arms race, with power-efficient embedded hardware and powerful edge hardware on the front lines. AI software that will almost certainly look rudimentary in a couple of years is already severely limited, if not unworkable, without powerful processing close to where the data is generated, at the edge.

Fortunately, the wide range of innovative, useful, and highly desirable AI solutions has embraced open collaboration and mutually beneficial standards, with a pragmatic consensus to build on existing cloud infrastructure, skill sets, and workflows. This opens the door to further simplification, integration, and pre-validation, helping teams avoid the inexperience, risks, and costs that often come with the necessary jump to distributed and edge computing. Today you can rely on existing talent, workflows, and infrastructure with our validated edge computing system, which is ready for NGC containers and their widely used software stacks, frameworks, and integrations.

Avoid long lead times, high costs, and unexpected hurdles in remote edge development, deployment, and management.

Lanner offers a low-maintenance, reliable, flexible, and tested hardware solution built on open standards, with the compute needed for a wide range of demanding AI and parallel workloads thanks to its NVIDIA T4 Tensor Core GPU.

Quickly test, deploy, and update solutions from an extensive catalog of both proprietary and open-source software containers, so you can focus on your core solution and expertise. All offerings are vetted and tested by NVIDIA through its NVIDIA GPU Cloud (NGC) Catalog initiative. Edge hardware that passes NVIDIA’s testing process, such as Lanner’s, is classified as “NGC-ready” for edge deployments.

Featured Product

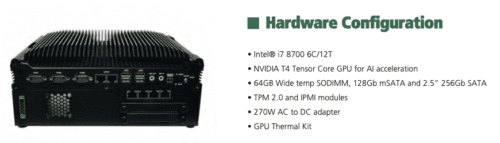

The LEC-2290E is purpose-built AI hardware that uses hardware architectures and management features already familiar to traditional IT professionals. It accelerates AI workloads at the edge with the Tesla T4 Tensor Core GPU. The NGC-ready appliance also provides an easy-to-deploy, cloud-native software stack: the NGC catalog offers containers, pretrained models, Helm charts for Kubernetes, and AI toolkits with SDKs.

The NGC-Ready Edge Appliance:

The LEC-2290E has passed a broad set of tests that validate its ability to deliver high performance when running NGC containers. These tests include:

- Single- and multi-GPU deep learning training, tested with TensorFlow, PyTorch, and the NVIDIA DeepStream SDK.

- High-throughput, low-latency AI inference, tested with NVIDIA TensorRT, TensorRT Inference Server (since renamed NVIDIA Triton Inference Server), and the DeepStream SDK.

- Application development, tested with the CUDA Toolkit, the development environment for NVIDIA's CUDA parallel computing platform and programming model, which lets applications offload parallel work to the GPU for large performance gains.

The LEC-2290E is also highly scalable, performance-optimized, and built with security in mind. Its other features include:

- Processing power: Intel® Core™ i7-8700 (6 cores/12 threads)

- Memory and storage: 64GB wide-temperature SODIMM memory, 128GB mSATA, and a 2.5” 256GB SATA drive

- Hardware security: TPM 2.0 support for integrated cryptographic keys

- Management and monitoring: support for IPMI modules

- Operating temperature: -20°C to 45°C with the GPU thermal kit (passive thermal solution)