According to Gartner’s Data and Analytics Leader’s Guide to Data Literacy, “by 2020, 50% of organizations will have insufficient AI and data literacy skills to realize real business value.”

The other 50% of the organizations within any industry will have data literacy, smart algorithms, and high-resource computing power to provide real value to the business. Artificial Intelligence and Machine Learning will help any organization evolve to the fourth industrial revolution.

But having advanced AI and ML models is not enough. AI without data is nothing, and AI without computational power is impossible.

In this article, we describe seven ways AI will impact Industrial Automation in 2020. These are AI technologies that are already taking off, such as computer vision, collaborative robots, and reinforcement learning.

Discover Insights from Data with AI

“Data is the new oil. It’s valuable, but if unrefined it cannot really be used. It has to be changed into gas, plastic, chemicals, etc. to create a valuable entity that drives profitable activity; so must data be broken down, analyzed for it to have value.” said Clive Humby, a British mathematician and entrepreneur in the field of data science.

What’s the point of having tons of terabytes of data stored?

Data is pretty much useless unless we make some sense out of it. Indeed, having lakes of data is important but being data literate is far more important for unlocking the real “oil” value of data. And the more data we have, the more valuable the analysis can be.

Industry generates huge volumes of valuable data every single day: logs from the manufacturing line, temperature sensor readings, video, audio, and more. With the right Artificial Intelligence models in place, all of this raw data can be turned into valuable insights. New insights can lead engineers or designers to discover new ways to improve a product or the assembly line, or even to spot trends.

Empowered by AI, professionals within any industry will be able to make faster and better decisions. The following industries can benefit from insight discovery:

- Mining, oil, and gas.

- Transportation.

- Manufacturing.

- Hospitality and food services.

- Construction.

- Finance and Insurance.

- Scientific research.

Improve Services and Products through Computer-Vision

Computer Vision, a field within AI, attempts to replicate the sophisticated capabilities of human vision and extract valuable information from images and video. It uses machine learning and neural networks to identify and process objects in videos or images.

Below is a picture of how computer vision identifies objects in an image.

To work, Computer Vision relies on three elements: visual data, smart algorithms, and high-performance computers.

Until recently, Computer Vision operated well below its potential. But now, Augmented Reality apps like Snapchat are beginning to embrace automation through computer vision. Below are some of the products and services most commonly improved by computer vision.

- Self-driving cars: The automobile industry is one of the pioneers of computer vision, and self-driving cars are a great example of it in practice. This technology helps cars make better sense of what’s happening around them. The cameras located around the car feed the central computer with raw, real-time visual data. This computer runs computer vision algorithms to process the images, find obstacles on the highway, and even read traffic signs.

- Face Recognition: A picture of a user is taken and sent to a computer, which runs computer vision algorithms to extract facial biometrics and compare them against a database of face profiles. Aside from finding criminals, face recognition can be used to improve security in products and services. Consumer devices such as mobile phones, door lock systems, health devices, and more can benefit from the security brought by facial recognition.

- Mixed Reality AR/VR: In mixed reality (AR/VR) applications like Pokemon Go, computer vision is responsible for overlaying virtual objects onto the physical world shown on the screen. Advanced computer vision algorithms can improve these services by identifying planes, such as streets or tabletops, in the video. Identifying these planes helps mixed reality applications deal with dimensions and depth.
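
The comparison step in the face recognition item above can be sketched as a nearest-neighbor search. This is a minimal illustration, not how any particular product works: it assumes a neural network has already turned each face image into a numeric vector (an "embedding"), and the profile names, the 4-dimensional vectors, and the 0.9 threshold are all hypothetical.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def best_match(query, profiles, threshold=0.9):
    """Return the name of the most similar stored profile, or None when
    no profile is similar enough to count as a match."""
    best_name, best_score = None, threshold
    for name, embedding in profiles.items():
        score = cosine_similarity(query, embedding)
        if score >= best_score:
            best_name, best_score = name, score
    return best_name

# Hypothetical face profiles; real embeddings have hundreds of dimensions.
profiles = {
    "alice": [0.9, 0.1, 0.3, 0.2],
    "bob": [0.1, 0.8, 0.2, 0.6],
}

print(best_match([0.88, 0.12, 0.31, 0.18], profiles))  # close to the "alice" profile
print(best_match([0.5, 0.5, -0.5, -0.5], profiles))    # unlike any stored profile
```

The threshold is the design choice that matters most here: set too low, strangers are accepted; set too high, legitimate users are rejected.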

Enhanced Data-driven Deep Learning for Smart Manufacturing

It all started with the popular lean manufacturing techniques that grew out of the Toyota Production System (TPS). This system relied on the uninterrupted measurement and statistical modeling of a massive number of processes.

As the data gathered from these processes started to grow, manufacturing facilities were able to feed it to high-performance computers running Machine Learning (ML) models. Today, Deep Learning (DL) plays a key role here, as it can effectively deal with non-linear data patterns. Deep learning is a family of machine learning techniques based on artificial neural networks. It can extract high-level insights from raw data inputs.

Machine Learning and Deep Learning will help the smart manufacturing plant:

- Improve the quality control for the assembly line.

- Improve product quality with anomaly detection and process monitoring.

- Enhance machine maintenance with predictive analysis.

- Help with capacity planning.
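
As a rough illustration of the anomaly detection and process monitoring item above, the sketch below flags sensor readings that stray far from a baseline of normal operation. It deliberately uses a simple statistical rule (a z-score) rather than a deep learning model, and the temperature values, baseline size, and threshold are made-up assumptions.

```python
import statistics

def find_anomalies(readings, baseline_size=10, z_threshold=3.0):
    """Flag readings that deviate strongly from normal operation.

    The first `baseline_size` readings are assumed to be normal and are
    used to estimate the mean and standard deviation of the process.
    """
    baseline = readings[:baseline_size]
    mean = statistics.mean(baseline)
    stdev = statistics.stdev(baseline)
    anomalies = []
    for index, value in enumerate(readings[baseline_size:], start=baseline_size):
        z_score = (value - mean) / stdev
        if abs(z_score) > z_threshold:
            anomalies.append((index, value))
    return anomalies

# Hypothetical temperature log from one machine on the assembly line.
temperatures = [70.1, 69.8, 70.3, 70.0, 69.9, 70.2, 70.1, 69.7, 70.0, 70.2,
                70.1, 69.9, 75.4, 70.0, 70.3]

print(find_anomalies(temperatures))  # list of (position, reading) pairs
```

A neural network would replace the fixed mean and standard deviation with a learned model of normal behavior, which is what lets it cope with the non-linear patterns mentioned earlier.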

The Open Visual Inference and Neural network Optimization toolkit (OpenVINO) is an example of the efforts being made to improve neural network performance on Intel processors. This toolkit helps with computer vision applications that rely on neural networks for processing and analyzing images.

Safer Robot Collaboration and Productivity with Cobots

Amazon took a big leap in the automation industry when it acquired the warehouse robotics startup Canvas Technology in early 2019. Amazon was able to build new warehouse bots thanks to sophisticated computer vision powered by Canvas Technology.

The new and improved autonomous system is already allowing Amazon warehouse workers to work safely alongside Collaborative Robots (Cobots). The technology is also helping track every movement of every product in the warehouse, with the aim of maximizing efficiency and productivity.

Cobots play a big role in settings such as manufacturing plants and laboratories. These robots are intended to work alongside humans, as opposed to big, caged industrial robots, which can be dangerous. Cobots are autonomous systems that can pick and place items, pack them, perform injections, run analyses, and do a lot more. The power of Cobots is limited to avoid accidents. They also have monitored stops and can keep track of motion and speed.

These robots can be configured with so much precision that they can even sketch!

Collaborative Robots are empowered by Artificial Intelligence, Machine Learning, and computer vision algorithms, which make them fast decision-makers. Cobots will not replace the human workforce; they will only help perform monotonous tasks that require high precision.

Improving Decision-Making Robots with Reinforcement Learning

Reinforcement Learning (RL) is a state-of-the-art machine learning technique that trains ML models for advanced decision-making and strategy-learning. When a model goes through reinforcement learning, it uses trial and error to find a solution to a complex problem. In other words, the machine gets rewarded or punished for the actions that it takes on the way to achieving a goal, which earns it a final reward.

This ML technique has been widely adopted by the gaming industry. In one case, an RL model was used to play an old, advanced RPG video game, Abbey of Crime, by itself. The model was capable of making decisions, learning, and completing the game successfully.

Minecraft, a modern popular video game, organized a competition called MineRL, which encouraged players to program RL models to play the Minecraft game. AWS is also organizing an autonomous Robocar competition, which promises to improve self-driving cars through ML and RL models.

RL helps a machine to learn to perform tasks without knowing much about how to do them at first. When reinforcement learning algorithms run through a massive number of problem-solving quests, the model/machine will attain incredible skills.

Aside from games, RL will also shape other industries. For example, a robot programmed with RL situated in an unknown maze-like environment will observe, navigate, and learn through the process. The next time the robot goes through the maze, it will be able to make automatic decisions that were previously learned.
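
The maze scenario above can be sketched with tabular Q-learning, one common RL algorithm (the article does not name a specific one, so this choice is an assumption). The 4x4 maze layout, the reward values, and the learning parameters below are all hypothetical; the robot is rewarded for reaching the goal and lightly punished for every extra step.

```python
import random

# Hypothetical 4x4 maze: S = start, G = goal, # = wall, . = free cell.
MAZE = [
    "S..#",
    ".#..",
    "...#",
    "#..G",
]
ACTIONS = [(-1, 0), (1, 0), (0, -1), (0, 1)]  # up, down, left, right
START, GOAL = (0, 0), (3, 3)

def step(state, action):
    """Apply an action; bumping into a wall or the border leaves the robot in place."""
    row, col = state[0] + action[0], state[1] + action[1]
    if not (0 <= row < 4 and 0 <= col < 4) or MAZE[row][col] == "#":
        row, col = state
    next_state = (row, col)
    if next_state == GOAL:
        return next_state, 1.0, True   # final reward
    return next_state, -0.01, False    # small punishment for every extra step

def train(episodes=2000, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Tabular Q-learning with epsilon-greedy exploration."""
    rng = random.Random(seed)
    q = {(r, c): [0.0] * 4 for r in range(4) for c in range(4)}
    for _ in range(episodes):
        state = START
        for _ in range(100):  # cap the length of each episode
            if rng.random() < epsilon:
                a = rng.randrange(4)  # explore: trial and error
            else:
                a = max(range(4), key=lambda i: q[state][i])  # exploit what was learned
            next_state, reward, done = step(state, ACTIONS[a])
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][a] += alpha * (target - q[state][a])
            state = next_state
            if done:
                break
    return q

def greedy_path(q, max_steps=20):
    """Walk the maze using only the learned values, with no exploration."""
    state, path = START, [START]
    for _ in range(max_steps):
        a = max(range(4), key=lambda i: q[state][i])
        state, _, done = step(state, ACTIONS[a])
        path.append(state)
        if done:
            break
    return path

q_table = train()
print(greedy_path(q_table))  # cells visited from start to goal
```

The `greedy_path` function corresponds to "the next time the robot goes through the maze": no more trial and error, only decisions read from the learned table.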

Taking Machine Learning to the Edge with AI-enabled Chips

In a typical server/client communication model, the server holds much of the computational, storage, and networking capacity. A clear example of this is cloud computing. The cloud has the infrastructure and services to run AI and ML algorithms over data and then send back the results. While this is a great solution for those with access to high-speed Internet and a reliable connection, it is unattainable for those in remote areas.

So instead of relying on a high-performance computer deployed in the cloud or in the core network, AI can now be taken closer to the end-user, or to the edge.

Edge computing will distribute the power of the cloud and bring it closer to the user. In edge computing, data is handled, processed, and transmitted by a large number of devices near where it is produced. Using this type of computing will enable ML and AI capabilities at the edge. Having access to intelligence without cloud-based AI services will benefit any industry, especially those operating in remote areas.

What are some use cases of ML taken to the edge?

An example of a device that can take Machine Learning to the edge is Intel’s Movidius Neural Compute Stick. This small device enables fast prototyping and deployment of Deep Neural Network applications at the edge. It uses a Vision Processing Unit (VPU) architecture, an AI-optimized integrated chip for accelerating computer vision based on neural networks.

The stick is as small and simple as a USB drive.

This Intel stick is already being used around the world in the sanitation and healthcare industries. One example is an AI system that detects harmful bacteria in water in real time, without needing a connection to the cloud. Another use case is a real-time skin cancer scanner.

Predictive Analysis Empowered by Deep Learning On-prem Platforms

Traditionally, an ML algorithm trains a model using a given labeled and structured data set. After some time, the model learns from the data and can make predictions about new data coming in. Deep Learning takes this same concept but can work with unstructured data that needs far less manual preparation. Deep Learning models can be given data such as images, video, or audio. This is why deep learning is crucial for speech and image recognition.

Deep learning depends on three factors: large amounts of data, intelligent algorithms, and GPUs to accelerate learning.

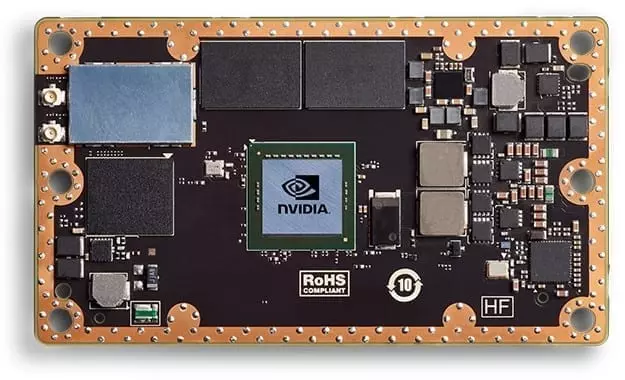

GPU-accelerated computing uses a Graphics Processing Unit (GPU) alongside the CPU to process intensive deep learning and analytics operations. It takes all that data, runs the analysis, and predicts trends. An example of this is the Nvidia Jetson, a GPU-based platform for high-performance AI at the edge.

GPU-accelerated computing is growing in popularity across industries. One application is predictive maintenance, which signals when certain machinery needs attention. This will be helpful for the Automotive, Manufacturing, Logistics & Transportation, Oil & Gas, and Utility industries.
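
To make the predictive maintenance idea concrete, here is a minimal sketch that fits a straight line to past vibration readings and estimates how long until a machine crosses a service threshold. It uses ordinary least squares rather than deep learning, to keep the example small, and the readings, units, and threshold are hypothetical.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    return slope, mean_y - slope * mean_x

def hours_until_threshold(hours, vibration, threshold):
    """Estimate the remaining operating hours before the fitted trend
    reaches `threshold`. Returns None when there is no upward trend."""
    slope, intercept = fit_line(hours, vibration)
    if slope <= 0:
        return None
    crossing_hour = (threshold - intercept) / slope
    return max(0.0, crossing_hour - hours[-1])

# Hypothetical vibration readings (mm/s) taken every 100 operating hours.
hours = [0, 100, 200, 300, 400]
vibration = [2.0, 2.5, 3.0, 3.5, 4.0]

remaining = hours_until_threshold(hours, vibration, threshold=7.0)
print(f"Schedule maintenance within about {remaining:.0f} operating hours")
```

A deep learning model would play the same role as `fit_line` here, but could learn from many sensors at once and capture wear patterns that are not linear.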