Notably, Intel ONP does not refer to a specific server product. Rather, it is an architectural design concept that supports SDN and NFV in order to provide flexible and agile network services for virtualized environments. Intel ONP may also denote a set of criteria that meets the performance and architectural requirements of the network industry.

Intel has defined the following criteria for an applicable ONP architecture:

- High-end Intel®-driven Hardware Platform:

The hardware platform must be driven by high-end processors, such as Intel® Xeon® processors, together with 10/40 GbE Ethernet controllers and Intel acceleration technologies such as Intel® QuickAssist, Intel® I/O Acceleration Technology, and Intel® Virtualization Technology features, in order to reliably run multiple open source software solutions and virtual machine middleware for virtualized high-volume applications.

- Assured Compatibility with Open Source, Open Standard Software:

The core value of SDN lies in integrating open source, open standard software tools on a high-end server machine. Widely adopted examples include OpenStack*, OpenDaylight* and Open vSwitch*. Support for these software elements enhances the agility and flexibility of network services.

- The Support of Intel® DPDK Technology:

The hardware platform must support Intel® DPDK (Data Plane Development Kit) to handle network packets efficiently.

- Multi-node Capability:

As discussed earlier, the server machine must possess multi-node capability in order to reduce the cost of ownership of fixed equipment while delivering more scalable services.

As conceptualized by Intel, the ONP-ready machine must meet the requirements in the diagram below. Each layer of the architecture is supported by open software such as OpenStack*, OpenDaylight* and Open vSwitch*.

Introducing Lanner’s HCP-72i1

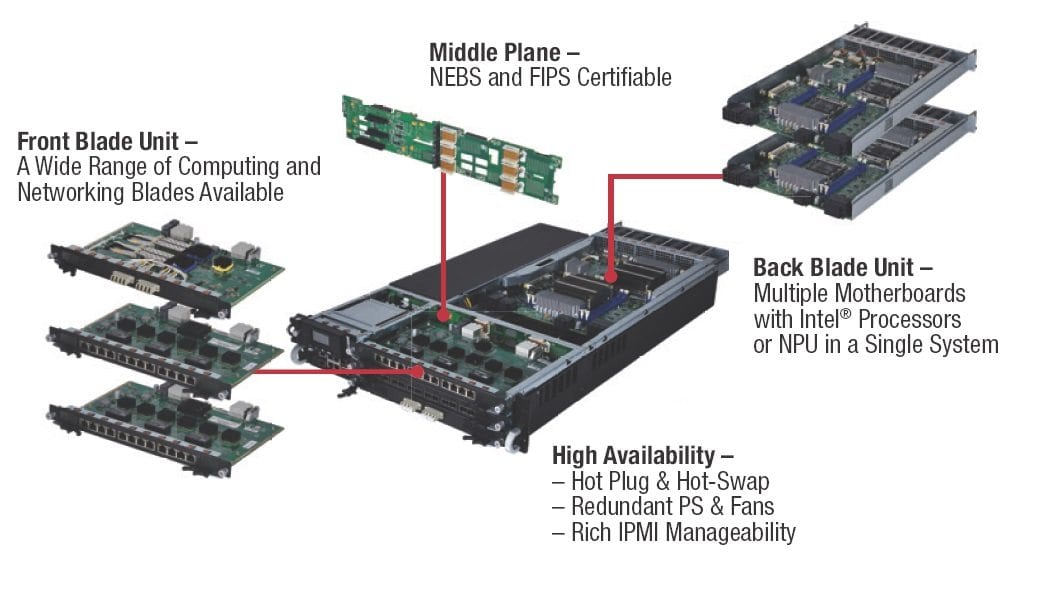

Lanner’s HCP-72i1 is built on our exclusive HybridTCA architecture, which offers greater flexibility and customizability than the traditional ATCA design, in order to meet the challenging demands of future telecommunication and data center applications. The modular HybridTCA design offers higher interconnectivity, RAS (Reliability, Availability and Serviceability), and integration, consolidating data management, network computing, and packet processing into a single platform. Regarding physical reliability, HCP-72i1 is a carrier-grade network appliance with a NEBS Level 3 compatible design, ready for telecom deployments.

Under the HybridTCA structure, the network appliance must support the following features:

Dual Motherboards Driven by Intel Xeon E5-2600 Series Processors

HCP-72i1 is designed with dual motherboards, each featuring two Intel® Xeon® E5-2600 v2 (Ivy Bridge) CPUs accompanied by the Intel C604 chipset for performance optimization. To bridge communication between the two CPUs on each board, HCP-72i1 implements Intel QuickPath Interconnect with link speeds up to 20 GT/s (bi-directional).

The interconnection between the two motherboards is provided by the NTB (Non-Transparent Bridge) built into the CPUs. This dual-board design with the built-in NTB gives HCP-72i1 optimal bandwidth in SDN and NFV related environments.

Modularized Architecture

Based on the HybridTCA architecture, HCP-72i1 is designed to integrate the control plane and the data plane into a single 2U appliance. In fact, every element is customizable. For instance, while an AdvancedTCA platform limits each board to 200 W, a HybridTCA platform can offer four CPUs with a thermal budget of 130 W per CPU. This delivers higher efficiency and a lower cost of ownership for infrastructure management.
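The power comparison above can be made concrete with a few lines of arithmetic. The sketch below is illustrative only: it uses the figures quoted in the text (200 W per AdvancedTCA board, 130 W per CPU, two boards with two CPUs each), and the function and constant names are hypothetical. It compares the CPU thermal budget alone against the AdvancedTCA board limit, which is a rough comparison since a board limit covers more than the CPUs.

```python
# Figures taken from the text; names are illustrative, not from any Lanner or Intel tool.
ATCA_BOARD_LIMIT_W = 200   # AdvancedTCA per-board power limit quoted in the text
CPU_BUDGET_W = 130         # thermal budget per CPU quoted in the text
CPUS_PER_BOARD = 2         # two CPUs on each motherboard
BOARDS = 2                 # dual-motherboard design


def cpu_budget_per_board(cpus_per_board=CPUS_PER_BOARD, cpu_budget_w=CPU_BUDGET_W):
    """CPU thermal budget carried by one motherboard, in watts."""
    return cpus_per_board * cpu_budget_w


def cpu_budget_total(boards=BOARDS):
    """CPU thermal budget across all motherboards, in watts."""
    return boards * cpu_budget_per_board()


if __name__ == "__main__":
    per_board = cpu_budget_per_board()
    print(f"CPU budget per board: {per_board} W")
    print(f"CPU budget in total:  {cpu_budget_total()} W")
    print(f"Exceeds the 200 W AdvancedTCA board limit: {per_board > ATCA_BOARD_LIMIT_W}")
```

Under these figures, the CPUs on a single board already account for more than an AdvancedTCA board would be allowed to draw in total.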

HCP-72i1 differentiates itself from the conventional AdvancedTCA architecture by using a middle plane, which connects each part of the system without cables. The middle plane of the Lanner HTCA platform can be customized according to the intended functions of the motherboards as well as the number and interconnectivity of the I/O blades.

Expandable Network I/O Blades

HCP-72i1 supports up to 3 network I/O blades. With this expansion, HCP-72i1 can support up to 36 x 1 GbE LAN ports or 24 x 10 GbE LAN ports, with a choice of RJ-45 or SFP ports. The expansion increases network throughput and bandwidth.
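As a rough illustration of what these port counts mean, the sketch below computes the aggregate nominal bandwidth of each configuration and the number of ports per blade. It assumes the ports are spread evenly across the three blades, which the text does not state, and the function names are hypothetical.

```python
def aggregate_gbps(ports, speed_gbps):
    """Nominal one-direction aggregate bandwidth of all ports, in Gbps."""
    return ports * speed_gbps


def ports_per_blade(total_ports, blades=3):
    """Ports per blade, assuming an even split across blades (an assumption)."""
    per_blade, remainder = divmod(total_ports, blades)
    if remainder:
        raise ValueError("ports do not divide evenly across blades")
    return per_blade


if __name__ == "__main__":
    for ports, speed in ((36, 1), (24, 10)):
        print(f"{ports} x {speed} GbE: {aggregate_gbps(ports, speed)} Gbps aggregate, "
              f"{ports_per_blade(ports)} ports per blade")
```

These are nominal link rates; achievable throughput depends on the traffic mix and on the packet processing software.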

Intel DPDK

To meet the packet processing needs promised by Intel ONP, HCP-72i1 is equipped with Intel DPDK to achieve optimal performance in packet handling.
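To give a sense of the packet processing load involved, the following sketch estimates the packet rate a single 10 GbE port must sustain at line rate, and the time available per packet. It is a back-of-the-envelope calculation rather than a DPDK program: it assumes minimum-size 64-byte Ethernet frames plus the standard 20 bytes of per-frame overhead on the wire (preamble, start-of-frame delimiter and inter-frame gap), and the function names are hypothetical.

```python
# Per-frame overhead on the wire: 7 B preamble + 1 B start-of-frame delimiter
# + 12 B inter-frame gap.
ETH_WIRE_OVERHEAD_BYTES = 20


def line_rate_pps(link_bps, frame_bytes=64):
    """Packets per second needed to saturate a link with frames of the given size."""
    bits_on_wire = (frame_bytes + ETH_WIRE_OVERHEAD_BYTES) * 8
    return link_bps / bits_on_wire


def per_packet_budget_ns(link_bps, frame_bytes=64):
    """Time available to process each packet at line rate, in nanoseconds."""
    return 1e9 / line_rate_pps(link_bps, frame_bytes)


if __name__ == "__main__":
    ten_gbe = 10e9  # 10 GbE nominal bit rate
    print(f"10 GbE, 64 B frames: {line_rate_pps(ten_gbe) / 1e6:.2f} Mpps")
    print(f"Per-packet budget:   {per_packet_budget_ns(ten_gbe):.1f} ns")
```

At roughly 14.88 million packets per second per 10 GbE port, each packet leaves about 67 ns of processing time, which is why the data plane needs a dedicated packet processing framework rather than a general-purpose network stack.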

Other important functions of HCP-72i1 include IPMI, redundant power supplies, replaceable cooling fans, HDD/SSD bays, hinged LCM display, and USB/Serial connections.

*Other names and brands may be claimed as property of others.