Emergency services are rapidly evolving to catch up with modern technologies like Augmented Reality and smartphone advanced mobile location (AML) services.

Critical services are making use of FirstNet, a nationwide critical access network that has been in development since 2012 and is built exclusively for emergency services. This network is set to enable the mission-critical voice services necessary for emergency communications this year (2019). Along with these essential services, there is a growing need for distributed computing architectures that give machine learning software the environment it needs to empower first responders and disaster workers.

Critical analytics depend on time-sensitive data processing, and distributed (fog) computing is well suited to meeting the needs of real-time analytics solutions.

Given the massive volume of sensor data streaming in from cameras, drones, satellites and emergency workers themselves (body cams, bio-monitors, location trackers), a capable on-premises computer is ideal for processing this data.

The solution needs to be efficient at collecting and processing real-time feeds to provide minute-by-minute reports, critical updates and actionable intelligence. Analytics and machine learning algorithms of this level will require substantial storage, networking and computing capabilities at the edge, where low-latency analytics can provide improved accuracy.
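As a rough illustration of minute-by-minute reporting, the sketch below groups timestamped sensor readings into one-minute windows and summarizes each sensor per window. The function name `minute_reports`, the sensor names and the sample values are all hypothetical; a real deployment would more likely sit on a streaming framework than on an in-memory list.

```python
from collections import defaultdict
from statistics import mean


def minute_reports(readings):
    """Summarize (timestamp_seconds, sensor_id, value) readings per one-minute window.

    Returns {minute_index: {sensor_id: {"count", "mean", "max"}}}.
    """
    windows = defaultdict(lambda: defaultdict(list))
    for ts, sensor_id, value in readings:
        # Integer division assigns each reading to its one-minute window.
        windows[int(ts // 60)][sensor_id].append(value)

    return {
        minute: {
            sensor: {"count": len(vals), "mean": mean(vals), "max": max(vals)}
            for sensor, vals in sensors.items()
        }
        for minute, sensors in sorted(windows.items())
    }


# Hypothetical readings from a firefighter's body-worn sensors.
readings = [
    (5, "heart_rate", 92),
    (30, "heart_rate", 98),
    (45, "helmet_temp_c", 41.0),
    (65, "heart_rate", 120),
    (110, "helmet_temp_c", 47.5),
]
reports = minute_reports(readings)
for minute, summary in reports.items():
    print(minute, summary)
```

Running this kind of aggregation on an onboard computer means only the compact per-minute summaries, rather than every raw reading, need to travel further up the network.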

It goes without saying that data centers and hyperscale cloud services offer the most cost-efficient networking and computing infrastructure. Yet in emergency scenarios, where seconds can be the difference between life and death, rapid and actionable analytics are the priority, especially for first responders.

By harnessing the distributed power of edge computing, i.e. onboard vehicle computers and resources at the network edge (access network, CORD), algorithms can effectively process time-critical analytics and generate timely reports without running into bottlenecks further down the network.

With this capability, first responders can benefit from reliable, speedy real-time intelligence. Traditional cloud computing, meanwhile, can run in parallel for deeper long-term insights from compute- and data-intensive analytics and artificial intelligence.
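One way to picture this split is a simple placement rule: send a job to the cloud when the cloud can still meet its deadline, and keep it at the edge otherwise. The sketch below is a minimal illustration only. The round-trip figures, the `Task` fields and the task names are assumptions made up for the example, not measurements from any real network.

```python
from dataclasses import dataclass

# Assumed round-trip latencies in milliseconds; illustrative, not measured.
EDGE_RTT_MS = 10
CLOUD_RTT_MS = 120


@dataclass
class Task:
    name: str
    deadline_ms: int       # how quickly a result is needed
    compute_ms_edge: int   # estimated processing time on edge hardware
    compute_ms_cloud: int  # estimated processing time in the cloud


def place(task: Task) -> str:
    """Prefer the cloud when it meets the deadline, otherwise fall back to the edge."""
    if CLOUD_RTT_MS + task.compute_ms_cloud <= task.deadline_ms:
        return "cloud"
    if EDGE_RTT_MS + task.compute_ms_edge <= task.deadline_ms:
        return "edge"
    # Neither tier meets the deadline; the edge is still the closer option.
    return "edge (best effort)"


# Hypothetical workloads: a time-critical overlay and a long-term report.
overlay = Task("hazard_overlay", deadline_ms=50, compute_ms_edge=20, compute_ms_cloud=5)
trend = Task("incident_trend_report", deadline_ms=60_000, compute_ms_edge=30_000, compute_ms_cloud=5_000)

for task in (overlay, trend):
    print(task.name, "->", place(task))
```

With these assumed numbers the overlay stays at the edge because the cloud round trip alone exceeds its deadline, while the trend report goes to the cloud, where the cheaper bulk compute lives.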

How 5G & Real Time Edge Analytics are Evolving Emergency Response Services

Cloud computing has been effectively used in many modern services due to its hyper-scalability and flexibility. The massive storage, networking and computing capabilities it affords enable thorough analytics and reporting for professionals.

However, there are several use-cases where the higher latency in round-trips to datacenters can make the difference between life and death, which is especially the case for emergency responders. Current-gen augmented reality headsets are expensive and rudimentary in capabilities, a limitation that edge computing looks to tackle.

Just as centralized computing has its limitations, so do traditional analytics reports like charts, graphs and maps. Humans can only process this information so quickly, which is why augmented reality will go hand-in-hand with real-time edge analytics. AI can quickly process information into actionable bits to overlay into the field of vision (FoV), enabling quick responses to time-critical tasks.

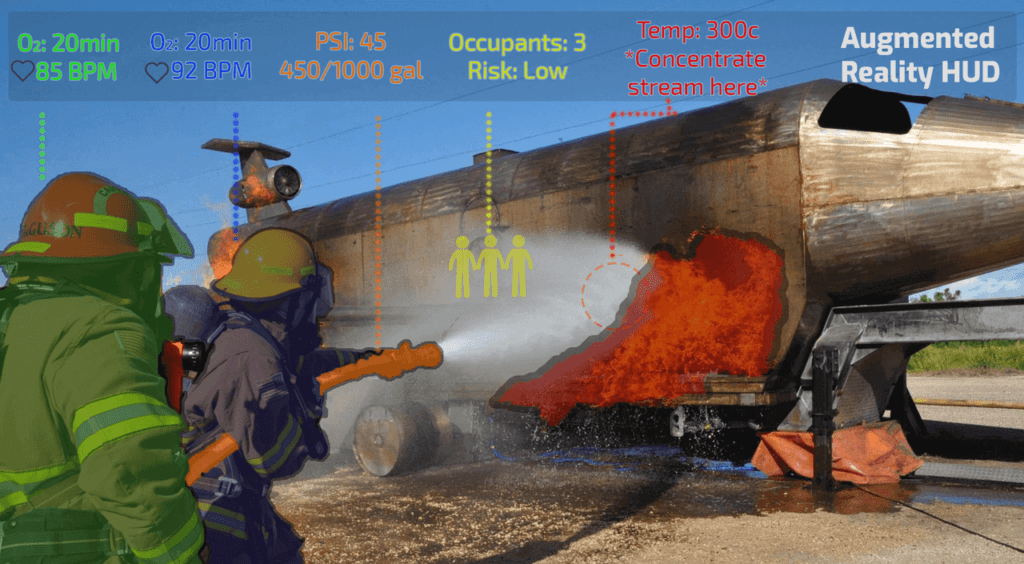

Firefighters are a great example, as they depend on a constant stream of information such as analytics on structural integrity, floor plans, hazards (bio, physical), physiological status and operational data like the safety points, occupancy and priorities for people in danger.

Location tracking & Traffic Navigation

5G wireless networks are by design denser than 4G/LTE networks. This enables technologies like highly efficient positioning (better than GPS) while using less battery overall. Combined with real-time traffic monitoring capabilities, highly accurate ETAs can be calculated.
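The ETA arithmetic itself is simple once positions and live traffic speeds are available: sum length divided by current speed over each road segment. The sketch below assumes a route is just a list of (length in km, current speed in km/h) pairs. The function name `eta_minutes` and the two sample routes are hypothetical.

```python
def eta_minutes(segments):
    """Return travel time in minutes for a route.

    segments: list of (length_km, current_speed_kmh) pairs, where the speed
    is assumed to come from a real-time traffic feed.
    """
    total_hours = 0.0
    for length_km, speed_kmh in segments:
        if speed_kmh <= 0:
            raise ValueError("segment is blocked; choose another route")
        total_hours += length_km / speed_kmh
    return total_hours * 60


# Two hypothetical routes to the same incident.
route_a = [(2.0, 60), (1.5, 30), (3.0, 90)]
route_b = [(4.0, 80), (2.0, 40)]

best = min((route_a, route_b), key=eta_minutes)
print(round(eta_minutes(route_a), 1), round(eta_minutes(route_b), 1))
```

Because the speeds are refreshed from live traffic, the same calculation can be rerun every few seconds to re-rank routes while the vehicle is already moving.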

Intelligent traffic systems (ITS) will make extensive use of 5G for traffic management, taking a proactive role for emergency vehicles like firetrucks and ambulances.

With autonomous vehicles on the horizon, driverless fire trucks can make extensive use of 5G’s positioning capabilities to plan effective routes and reduce accidents in high-speed scenarios.

Situational Awareness

Sci-fi movies and video games are great windows into the possibilities for technology’s usage, especially video games, as they use technology to empower the user with abilities and enhancements.

While humans cannot hold a candle to the data-processing, number-crunching prowess of computers, we do excel at processing visual information even at a glance. Though AR/VR is still in its infancy, rapid innovations in software are enabling many futuristic applications. With 5G on the horizon, the edge computing capabilities will enable things beyond current hardware & power (battery) constraints.

A great example is the HUD (heads-up display), which is standard in most first-person shooters because it offers useful monitoring of resources, bio-status and mission status. Most of this is already achievable with current-gen technology.

AR/MR takes that lesson and transforms analytics from spreadsheets and mission summaries into visual cues for humans, just like video games. Instead of opening reports and speed-reading through paragraphs for the actionable data points, AI can implant the critical information directly into the FoV (field-of-vision).

Instead of wasting valuable time studying the infrastructure and what lies beyond the user’s FoV, mixed reality can allow the user to “see” behind walls, beyond the human eye. Machine learning can use cameras and other sensors to generate human shapes, portray accurate positioning using infrared readings, overlay structural blueprints, and measure distances accurately at a glance using overlay grids, pointers and other visual cues.
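To make the overlay idea concrete, the sketch below places a marker for a detected person on a headset display and reports the distance to them. It uses a standard pinhole camera projection and assumes the person's 3D position relative to the headset is already known from the sensors. The focal length, display center and coordinates are hypothetical values chosen for the example.

```python
import math


def project_to_display(point_m, focal_px, center_px):
    """Project a 3D point onto the display with a pinhole camera model.

    point_m: (x, y, z) in metres in the headset frame, with x to the right,
    y downward and z straight ahead. Returns pixel coordinates (u, v), or
    None when the point is behind the viewer.
    """
    x, y, z = point_m
    if z <= 0:
        return None
    u = focal_px * x / z + center_px[0]
    v = focal_px * y / z + center_px[1]
    return (u, v)


def distance_m(point_m):
    """Straight-line distance from the headset to the point, in metres."""
    return math.sqrt(sum(c * c for c in point_m))


# Hypothetical detection: a person 3 m to the right and 4 m ahead.
person = (3.0, 0.0, 4.0)
marker = project_to_display(person, focal_px=800, center_px=(640, 360))
print(marker, distance_m(person))
```

The same projection works whether or not a wall stands in between, which is what lets a sensor-derived position appear as a marker "behind" the wall in the wearer's view.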

AI and Data Enhanced Decision-Making

With robust 5G technologies, the augmented/mixed reality that has assisted gamers since the advent of first-person shooters will become a reality. Spatial and situational awareness technology will gradually take shape in our world as augmented reality.

With so much data at the tip of our eyes so to speak, great improvements are in store for real-time decision making. Firefighters will be navigating through hazards using extra-sensory information overlaid into helmets, assessing capabilities and limitations while ordering assistance on-the-fly.

This technology is set to evolve every industry, with the only constraints being temporary ones: time and cost. As technology continues its exponential growth, 5G will curtail the upfront investment in mobile hardware through edge computing.

5G wireless will soon enable:

Doctors with AR glasses overlaying X-rays and vascular maps.

Skilled repair technicians assisting onsite technicians remotely.

Soldiers enhancing their vision with extrasensory capabilities for better awareness and decision making.

Firefighters easily counting occupants, analyzing temperature readings and calculating risks.

Super Ambulances

With medical care, it goes without saying that time is the most valuable resource. Given this, it’s no surprise emergency medical care is quickly shifting away from central locations like large hospitals and towards local clinics and highly equipped ambulances, or super ambulances.

These vehicles will be equipped with massive technological and computational resources to speed up diagnosis and analytics. Wireless networks (5G, 4G/LTE) will provide the data on patients: their files and intricate maps (X-ray, MRI, CT and vascular scans). Ruggedized onboard computing systems will process the patient’s state along with these records and send reports to medical data centers.

The speedy acquisition and application of this data will greatly improve emergency medical care. In the near future, the cost of cutting-edge medical augmented reality will be mitigated by harnessing the edge computing power, high bandwidth and low latency of 5G networks.

Effective Training

For years now expensive real-world training has been largely replaced with highly accurate simulators. With the growing prevalence of drones and autonomous vehicles, this is not going to slow down any time soon.

The merging of reality and physically accurate simulators has improved the effectiveness of virtualization in training programs, transferring skills learned virtually to the real-world scenarios envisioned.

This can be seen in the widespread availability and commercial success of accurate simulators for trains, boats, planes and cars. They are so successful, in fact, that the military uses common gaming peripherals such as Xbox controllers for its actual drones and robots, as operators are already well acquainted with their usage.